Post-Quantum AI Infrastructure Security: A Complete Guide for 2026

The "wait-and-see" era for quantum-resistant security is officially dead. If you’re still treating quantum threats like some sci-fi plot meant for the 2040s, you’re already behind.

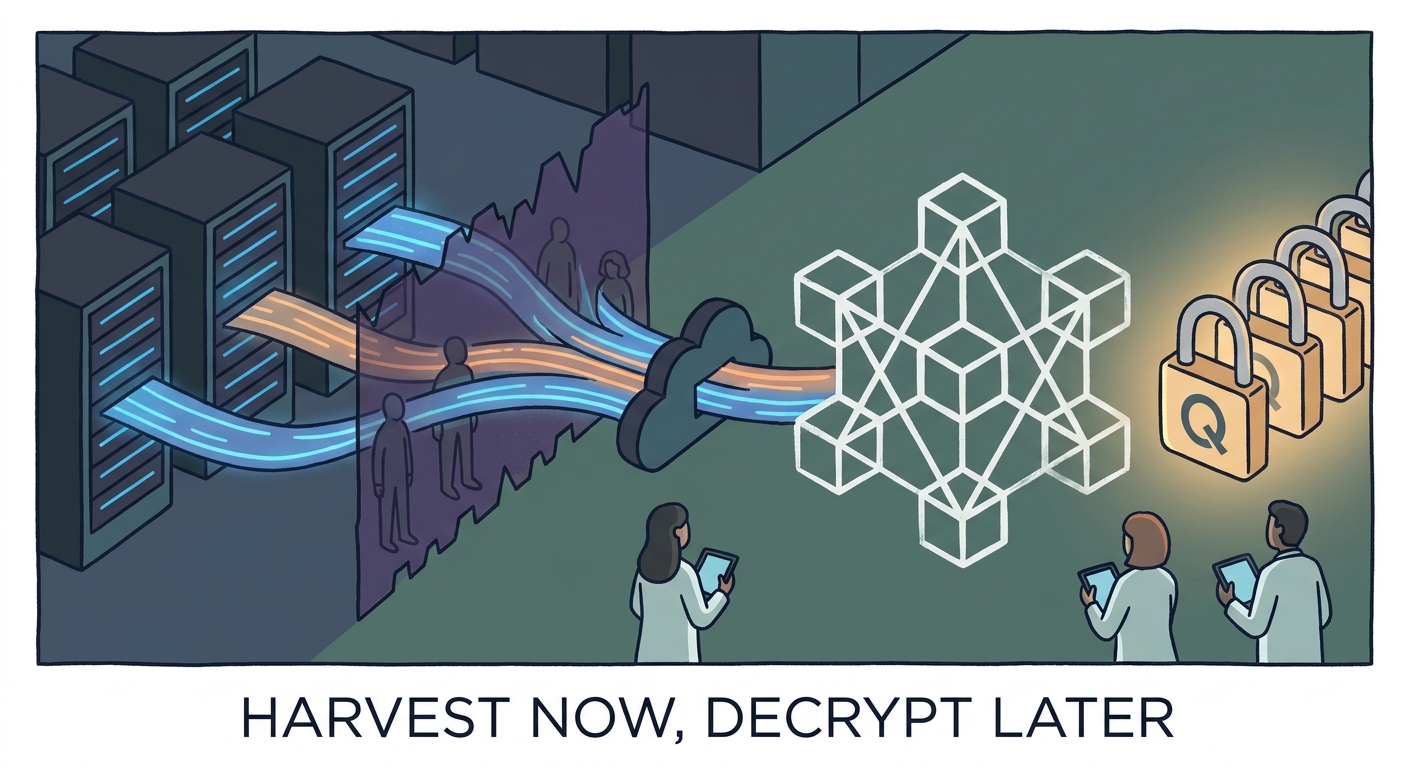

By 2026, enterprise AI isn't just a pilot program; it’s the backbone of your operations. But there’s a catch. The "Harvest Now, Decrypt Later" (HNDL) strategy—where attackers hoover up your encrypted traffic today, waiting for the hardware to crack it tomorrow—has moved from a fringe theory to a board-level liability. If your infrastructure isn't already pivoting to post-quantum standards, you’re handing your most sensitive data to future adversaries on a silver platter.

To survive this reality, you need to follow The 2026 Roadmap to Post-Quantum AI Infrastructure Security. Today’s competitive edge can turn into tomorrow’s catastrophic breach faster than you think. Let’s fix that.

The New Threat Vector: Agentic AI and MCP Sprawl

We’re living in the age of the autonomous agent, yet our security perimeters are still stuck in the early 2010s. We’re guarding the front door while the back door is wide open.

The Model Context Protocol (MCP) is the industry’s darling right now. It makes connecting AI models to external data seamless. But it’s also the ultimate "Shadow IT" vector. As noted in the Model Context Protocol (MCP) Official Documentation, these servers act as bridges, allowing LLMs to query databases, file systems, and internal APIs in real-time.

The problem? These pipelines are often invisible to traditional perimeter security. A developer spins up a local MCP server to give an agent access to a private documentation store, and—poof—they’ve punched a hole in the firewall. Often, this hole lacks basic authentication or encryption.

This is the breeding ground for "Tool Poisoning." An attacker doesn't need to hack the model itself; they just need to compromise the MCP connection. From there, they feed the agent malicious instructions or poisoned data. The agent, thinking it’s doing its job, executes those instructions with high-privilege access. It’s like giving the keys to the vault to an employee who hasn't been vetted, just because they’re wearing the right uniform.

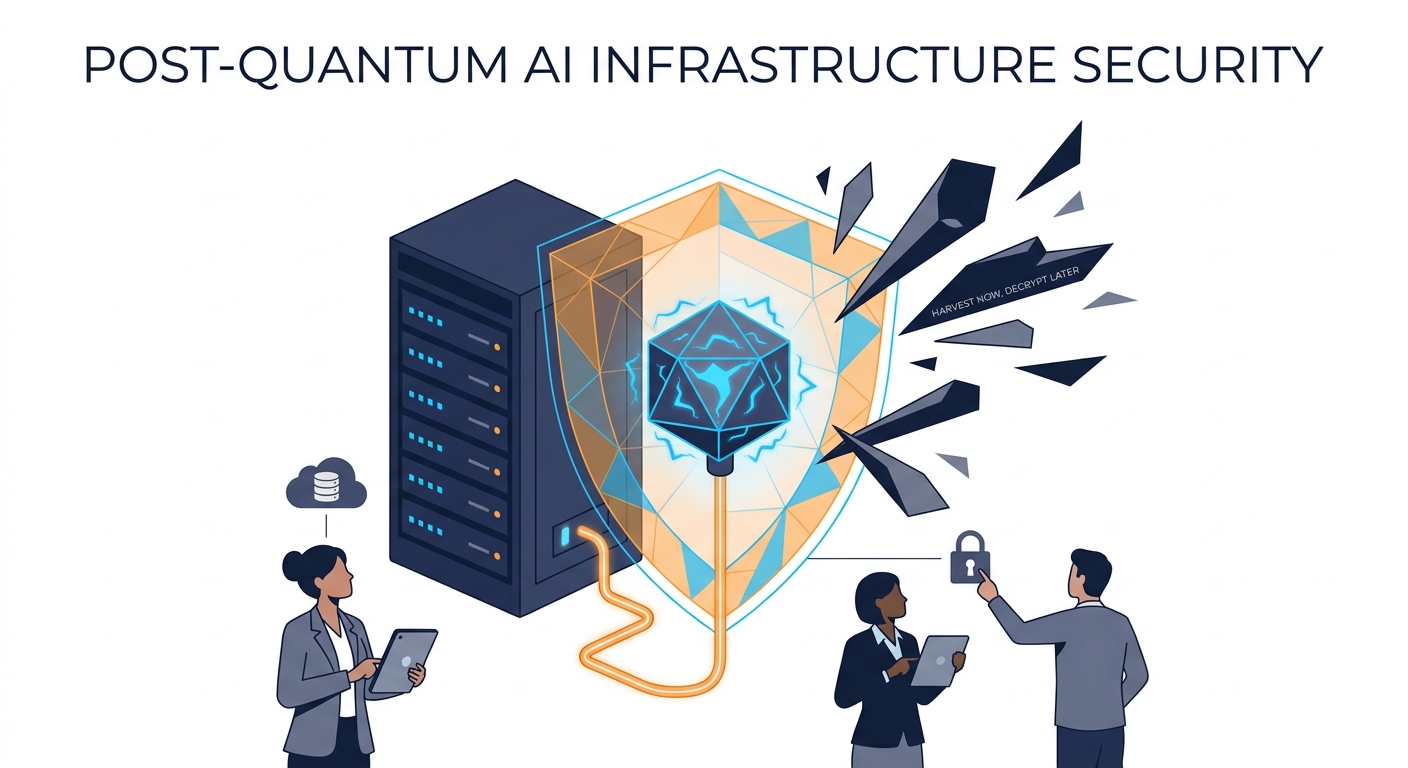

Visualizing the Modern AI Attack Surface

Modern AI architecture isn't a closed loop anymore. It’s a messy, sprawling web. Your "trust boundary" is shifting every time an agent fires off a query. Here is what the interaction between your users, agents, and data servers looks like in a high-risk environment:

As you can see, the MCP layer is your make-or-break point. Without enforced PQC (Post-Quantum Cryptography) and zero-trust verification at this nexus, your AI agent is effectively a blind, high-speed executor of potentially hostile commands.

Is Your Infrastructure Ready for NIST-Approved PQC?

Most people hear "quantum-resistant encryption" and think, Great, another massive rip-and-replace project. It doesn't have to be that way. The NIST Post-Quantum Cryptography Standardization project has actually handed us a map.

Algorithms like CRYSTALS-Kyber for key encapsulation and Dilithium for digital signatures aren't theoretical anymore. They’re ready for the real world.

The strategy for 2026? Stop waiting for a total hardware refresh. Focus on crypto-agility instead. By wrapping your existing TLS 1.3 traffic in hybrid PQC schemes, you can keep legacy systems happy while ensuring your data remains gibberish to quantum computers. It’s about building a secondary line of defense that doesn't break the first one.

The Strategy for Quantum-Resistant AI Defense

Securing an AI-driven environment is a balancing act. You need speed, but you can’t sacrifice rigor. Here is your three-step game plan:

Step 1: Discovery & Inventory You can’t protect what you can’t see. Security teams need to map every single active MCP connection. If an agent is talking to a data source, you better know exactly what that source is, what privileges it holds, and which version of the protocol it’s using. If you can’t audit it, shut it down.

Step 2: Crypto-Agility Implement hybrid models. Layer PQC alongside your current classical cryptographic primitives. If a vulnerability is found in a specific PQC implementation—and it will happen—your fallback, classical encryption still stands. This is the only way to avoid the "quantum trap" of being locked into a single, potentially obsolete standard.

Step 3: Hardware-Level Protection Software encryption is great, but it’s not enough if your models are running on GPUs vulnerable to side-channel attacks. You need to look into Secure Enclaves for Post-Quantum AI Model Execution. This keeps your model weights and inference data encrypted even while they’re being processed, providing a crucial layer of protection against both current and future threats.

Monitoring for Malicious Activity in Quantum-Era Streams

Let’s be honest: signature-based detection is dead. It’s an antique. In a world where AI agents can generate unique, polymorphic attack vectors at machine speed, your monitoring stack needs to be smarter.

You need AI-Driven Anomaly Detection in Post-Quantum Context Streams. Your system should be able to spot when an MCP connection starts acting "weird."

If an agent suddenly wants to touch a data table it’s never looked at before, or if the context window length spikes for no reason, your security system should kill the connection automatically. This isn't just about blocking bad guys; it's about establishing a behavioral baseline for every single agent-tool interaction.

The Regulatory Landscape: What Do You Need to Know?

Regulators are waking up. The CISA Quantum Readiness guidelines make it clear: if you’re in critical infrastructure, the transition isn't optional. It’s a mandate.

For the private sector, this is a loud signal. Compliance frameworks are pivoting. Auditors aren't just checking if you use encryption anymore; they’re checking if your encryption can stand up to a quantum computer. If you can’t demonstrate that, you’re a liability. Building a framework that handles both AI autonomy and quantum readiness is the new gold standard for risk management.

Conclusion: Beyond Q-Day

"Q-Day"—the moment quantum computers break our current asymmetric encryption—isn't a distant, speculative threat. It’s a deadline. 2026 is your final window to move from reactive patching to a proactive, quantum-resistant architecture.

The convergence of AI and quantum computing has leveled the playing field for attackers. Your security posture needs to be continuous, automated, and agnostic to whatever hardware is running underneath. Don't be the organization that gets caught off guard. Start auditing those MCP connections today and begin the move toward hybrid, quantum-resistant encryption. The future of your data is literally on the line.

Frequently Asked Questions

Why is 2026 considered a critical year for post-quantum security?

2026 is the year NIST standards move from "recommended" to "required" in many circles. It’s when the "Harvest Now, Decrypt Later" threat moves from a tech-bro talking point to a genuine business risk. If you don't start migrating now, you are effectively leaving your long-term secrets exposed.

How does Model Context Protocol (MCP) increase our infrastructure risk?

MCP creates a high-privilege bridge between AI agents and your internal data. Because many developers deploy MCP connections quickly—often without telling the security team—these connections become invisible "Shadow IT" pathways that bypass traditional firewalls.

Do I need to replace all my current encryption to be quantum-resistant?

No. That’s a recipe for disaster. The best practice is "crypto-agility." Use hybrid schemes that wrap PQC algorithms around your existing standards. This keeps your current systems running while adding a layer of quantum defense, allowing for a phased, safer migration.

What are the biggest threats to AI agents in 2026?

"Tool Poisoning" and prompt injection are the big ones. Attackers manipulate the data or instructions fed to an agent via MCP connections, causing the agent to do things it shouldn't—like exfiltrating data or bypassing security—all while appearing to act on legitimate, authorized commands.